Boomi Consumption

Are you embarking on a Boomi consumption project but don’t know how to model your messages? Do you want to make sure you are using your messages efficiently so you can get more bang for your buck? Then this blog post is for you.

This advice comes from a 9-year Boomi veteran who recently completed his first consumption project and learned some valuable lessons along the way. We'll be applying these lessons in future consumption projects so we can estimate costs more accurately, minimise consumption and start tracking usage earlier in the project. This is especially useful for Senior Boomi Engineers who have only ever worked on connector-licensed projects.

Here we’ll cover some of the factors that need to be considered when embarking on a consumption project.

Estimating Consumption

When embarking on a new consumption project, one of the first things to determine is what the message consumption will be. Connector licensing lets you know the costs upfront. Consumption, by contrast, depends directly on numerous factors:

- the number of integrations being developed
- the frequency of executions (e.g. how many times a day a schedule runs, or how frequently an API is called)
- the number of environments (e.g. whether you'll be running these integrations in dev/sit/test/prod)
- consumption due to other products (e.g. DataHub, Flow, etc.)

Unfortunately, there's no tool to help with estimating your consumption. However, the following approach is a good place to start.

Calculating Integration Min/Max Costs

First, a few definitions:

- Cost – how many messages will be consumed.
- Base Cost – the connector calls that always happen, regardless of whether they return data. An example is a schedule querying a database for changed records, which may or may not return results. Don't forget logging connector calls, which may be made at the end of a process.
- Dynamic Cost – the connector calls that happen depending on the results of previous connector calls. An example is the database query from the Base Cost returning results, which in turn need to be written to an API.

With those defined, your spreadsheet should include the following:

- List all integrations in scope
- Keep Schedules, APIM APIs and Events separate from each other
- For Schedules:
  - Include the Base Cost
  - Estimate the Dynamic Cost
  - Add columns for Min Cost (the Base Cost) and Max Cost (Base Cost + Dynamic Cost)
- For APIM APIs:
  - Include the API Cost, i.e. 1 message for the API call itself
  - Include the Base Cost
  - Estimate the Dynamic Cost
  - Add columns for Min Cost (API Cost + Base Cost) and Max Cost (API Cost + Base Cost + Dynamic Cost)
- For Event Streams events:
  - Include the Event Cost, divided by 100 (as 100 events = 1 platform message)
  - Include the Base Cost
  - Estimate the Dynamic Cost
  - Add columns for Min Cost (Event Cost + Base Cost) and Max Cost (Event Cost + Base Cost + Dynamic Cost)

For all of them, add an assumptions column and review it with the appropriate stakeholders.

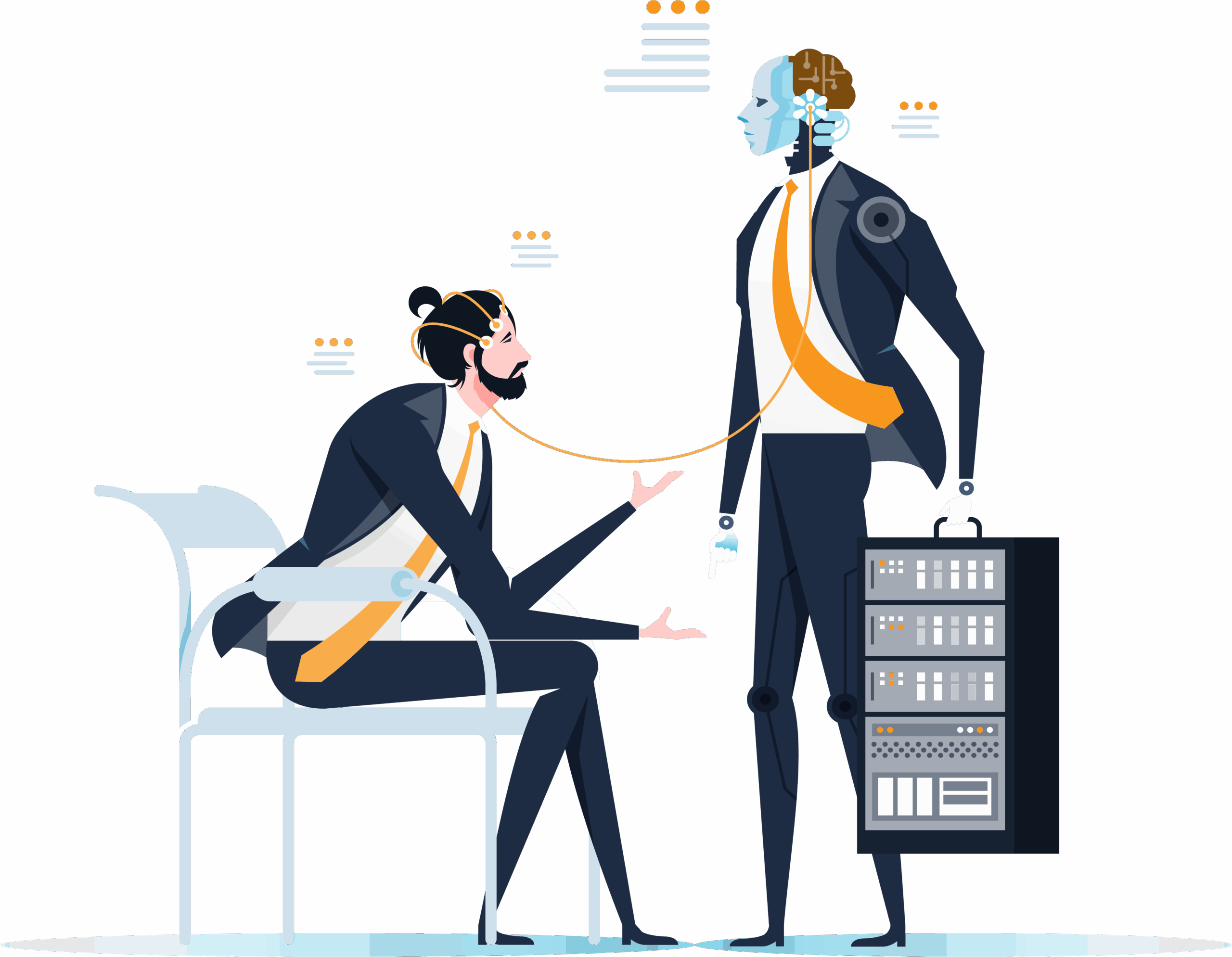

So, an example might look like this:

Image 1: Example Cost Calculations
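The following minimal Python sketch makes the spreadsheet rules concrete by calculating Min/Max Cost per integration. The `Integration` class, its field names and the example figures are hypothetical. The sketch encodes the rules listed above: an APIM API call is 1 message, 100 events are 1 platform message, and schedules start from the Base Cost. It also assumes each event-triggered execution handles a single event unless told otherwise.

```python
from dataclasses import dataclass


@dataclass
class Integration:
    """One spreadsheet row (hypothetical field names)."""
    ref: str              # Int Ref or integration name
    kind: str             # "schedule", "api" or "event"
    base_cost: float      # connector calls that always happen, including logging
    dynamic_cost: float   # estimated connector calls that depend on earlier results
    events: int = 1       # events per execution (assumption; Event Streams only)


def entry_cost(i: Integration) -> float:
    """Messages for the trigger itself, following the rules above."""
    if i.kind == "api":
        return 1.0               # the APIM API call counts as 1 message
    if i.kind == "event":
        return i.events / 100    # 100 events = 1 platform message
    return 0.0                   # schedules: Min Cost is just the Base Cost


def min_max_cost(i: Integration) -> tuple[float, float]:
    """Return (Min Cost, Max Cost) for one integration."""
    entry = entry_cost(i)
    return entry + i.base_cost, entry + i.base_cost + i.dynamic_cost


# Example rows with made-up figures
rows = [
    # DB query + end-of-process log always run; posting results to an API only sometimes
    Integration("INT001", "schedule", base_cost=2, dynamic_cost=1),
    # one lookup always happens; two writes only when there is data to sync
    Integration("INT002", "api", base_cost=1, dynamic_cost=2),
    Integration("INT003", "event", base_cost=0, dynamic_cost=1),
]
for row in rows:
    print(row.ref, min_max_cost(row))
```

Keeping the trigger cost in its own function mirrors the spreadsheet's separate API Cost and Event Cost columns. This makes it easy to change if your tier's rates differ.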

When calculating the base and dynamic costs, don't forget to factor in the following:

- JWT tokens – if you're using the REST client and need to retrieve a JWT token first
- Pagination – each connector call is a message, so calling the same connector to retrieve 5 pages of results costs 5 messages
- Logging – this is often missed, especially if you log a success event for every execution
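As a rough illustration of how these factors add up, the hypothetical helper below counts the messages for one execution of a paginated REST read. It assumes:

- one JWT token call per execution (no token caching across executions)
- a fixed page size
- a fixed number of logging calls

Adjust these to match your own process design.

```python
import math


def execution_messages(records: int, page_size: int, needs_jwt: bool, log_calls: int) -> int:
    """Messages for one execution of a paginated read (hypothetical helper)."""
    # Every page is a separate connector call, so every page is a message.
    # Even an empty result costs one call, which is why this belongs in the Base Cost.
    pages = max(1, math.ceil(records / page_size))
    jwt_calls = 1 if needs_jwt else 0  # token retrieved before the REST call(s)
    return jwt_calls + pages + log_calls


# 450 changed records at 100 per page: 1 JWT + 5 pages + 1 success log
print(execution_messages(450, 100, needs_jwt=True, log_calls=1))
# No changes: still 1 JWT + 1 (empty) page + 1 log
print(execution_messages(0, 100, needs_jwt=True, log_calls=1))
```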

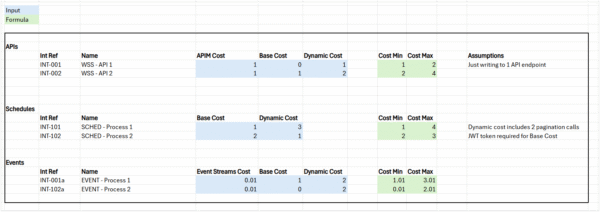

Calculating Monthly Costs

Next, using these costs, you want to determine the cost per month. This uses the min/max values derived above, then applies the frequency of executions for each integration to come to the total monthly cost.

Using the APIs/Schedules/Events documented above, start a new sheet which contains the Int Ref (maybe the Name) and the min/max costs (vlookup is useful here).

-

APIs

-

Ideally you’ll have an estimate of API calls per month – use these values, then the min/max cost columns are simply multiplied by the min/max cost.

-

-

Schedules

-

Are a bit trickier, as the executions per month is a function of the frequency, hours of operation and days per month. The executions per day is simply the (60/Frequency)*hours of operation; the executions per month is the executions per day*days per month.

-

-

Events

-

Again can be tricky depending on how accurate you want to be. Events would usually be spawned from APIs or Schedules, but Schedules won’t always spawn events (e.g. same logic that is used to determine dynamic cost – not every schedule execution will result in an event). As such, it may be simpler to treat these the same as APIs and estimate the events per month.

-

Image 2: Example Monthly Cost Calculations

Then finally you simply total up the Min / Max Costs to get the per integration and total costs.
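The monthly calculation can be sketched the same way. This minimal Python version assumes the schedule frequency is expressed in minutes, as the executions-per-day formula above implies. It uses made-up figures for one schedule and one API. Events would be handled like the API, using an estimated count per month.

```python
def schedule_executions_per_month(frequency_minutes: float, hours_per_day: float,
                                  days_per_month: int) -> float:
    """Executions per day = (60 / frequency) * hours of operation; then * days per month."""
    executions_per_day = (60 / frequency_minutes) * hours_per_day
    return executions_per_day * days_per_month


def monthly_cost(executions_per_month: float, min_cost: float,
                 max_cost: float) -> tuple[float, float]:
    """Multiply the per-execution Min/Max Costs by the executions per month."""
    return executions_per_month * min_cost, executions_per_month * max_cost


# Hypothetical integrations: (executions per month, Min Cost, Max Cost)
integrations = {
    # every 15 minutes, 12 hours a day, 22 business days
    "INT001 schedule": (schedule_executions_per_month(15, 12, 22), 2, 3),
    # estimated 10,000 API calls per month
    "INT002 api": (10_000, 2, 4),
}

per_integration = {ref: monthly_cost(*row) for ref, row in integrations.items()}
total_min = sum(lo for lo, _ in per_integration.values())
total_max = sum(hi for _, hi in per_integration.values())
print(per_integration, total_min, total_max)
```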

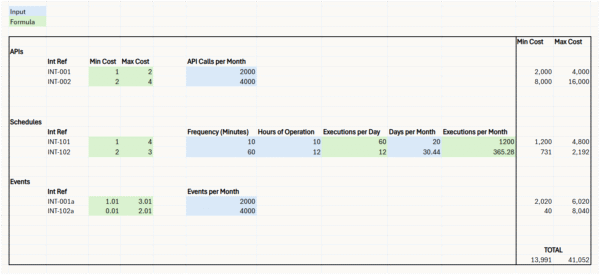

Per Environment Costs

The final step is to calculate the consumption for all environments. It’s easy to just focus on what the costs will be once you are live, but during the SDLC, you will likely be running at least 2-3 environments concurrently. After going live, you’ll still likely be running at least one or two non-prod environments during hypercare, albeit at a reduced rate.

There are probably various ways of determining the final costs, but below is a simple % loading approach. This involves rows for each environment, one for % loading and one calculation that takes the Total Max Cost from above and multiplies by the loading. This can provide you the estimated monthly cost throughout the whole SDLC, the ongoing monthly costs, and the estimated yearly cost.

Image 3: Per Environment Calculations
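Here is a minimal sketch of the % loading approach. It assumes each project phase has its own set of environment loadings, applied to the production Total Max Cost. The phase names, durations and percentages are invented for illustration only. Substitute the figures agreed with your stakeholders.

```python
def monthly_cost_all_envs(total_max_cost: float, loadings: dict[str, float]) -> float:
    """Total Max Cost multiplied by each environment's % loading, summed."""
    return sum(total_max_cost * pct for pct in loadings.values())


TOTAL_MAX_COST = 40_000  # hypothetical production Total Max Cost per month

# (phase, months, {environment: loading}); all figures hypothetical
plan = [
    ("SDLC", 6, {"dev": 0.25, "test": 0.5}),
    ("Live", 6, {"prod": 1.0, "test": 0.25}),  # non-prod kept at a reduced rate
]

yearly = 0.0
for phase, months, loadings in plan:
    monthly = monthly_cost_all_envs(TOTAL_MAX_COST, loadings)
    yearly += monthly * months
    print(f"{phase}: {monthly:,.0f} messages/month")
print(f"Estimated year: {yearly:,.0f} messages")
```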

Other Costs

As mentioned above, other services also consume messages. Here we've only covered Integrate, APIM and Event Streams, so you'll also need to factor in costs from DataHub, Data Integration, Control Plane, etc. Boomi Data Integration (Rivery) and AI are particularly expensive relative to Integrate, at a cost of 50 and 100 messages respectively.

DataHub Project Tips

Tip #1: Estimate and Manage Golden Record Consumption

DataHub projects introduce their own complexity. The most obvious cost to keep in mind is Golden Record consumption. 1 Golden Record consumes 1 message per month, so 12 messages per year.

Unlike Integrate, DataHub has no environments as such, although you'll probably create three repositories: one each for Dev, Test and Prod. Just as Integrate consumption is counted across all environments, DataHub consumption is counted across all repositories.

Apart from including these in your estimates, make sure you clear out any Golden Records in non-prod repos that are no longer being used. For example, if you're testing your Initial Load, clear the repo after testing, as every day those Golden Records exist counts towards your consumption limit. This may not matter if you're well within your allocated tier, but if you're getting close to exceeding it, clear them out.
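The Golden Record arithmetic is simple but easy to underestimate once several repositories are involved. This sketch applies the rule of 1 message per Golden Record per month across all repositories. The repository sizes are hypothetical. It also assumes records exist for whole months, so it does not prorate records created or cleared mid-month.

```python
def golden_record_messages(records_per_repo: dict[str, int], months: int = 12) -> int:
    """1 Golden Record = 1 message per month, counted across every repository."""
    return sum(records_per_repo.values()) * months


repos = {"dev": 5_000, "test": 20_000, "prod": 50_000}  # hypothetical sizes
print(golden_record_messages(repos, months=1))   # per month, all repos
print(golden_record_messages(repos))             # per year

# Clearing the test repo after Initial Load testing
repos_cleared = {**repos, "test": 0}
print(golden_record_messages(repos_cleared))
```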

Tip #2: Minimise and Track Initial Load Consumption

The other way consumption can sneak up on you is with your Initial Load testing. If you've not done a DataHub project before, this refers to the integrations used to bulk load data from your source systems into DataHub, as well as syncing this data to downstream systems. By their very nature they can consume a lot of messages, especially if each Golden Record requires multiple connector calls to sync. On top of this, you'll probably do multiple test runs before finally getting to the Production load:

- initial development testing
- populating the different repos
- performance testing

There's not much you can do to minimise this consumption, apart from not testing the full load until you're ready for full performance testing. That makes it important to factor this into your estimates and track your consumption, so there are no surprises when you view your usage.
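The following rough sketch shows why deferring full-volume runs matters. It totals Initial Load consumption over a series of test runs, each expressed as a fraction of the full data set. The record count, calls per record and run plan are hypothetical. `calls_per_record` is assumed to cover both loading into DataHub and syncing downstream.

```python
def initial_load_messages(golden_records: int, calls_per_record: int,
                          run_fractions: list[float]) -> int:
    """Sum the connector calls across all load runs (hypothetical model)."""
    return sum(round(golden_records * f) * calls_per_record for f in run_fractions)


RECORDS, CALLS = 50_000, 3  # hypothetical volume and calls per Golden Record

# Small subsets for dev testing; full volume only for performance testing and Production
deferred = initial_load_messages(RECORDS, CALLS, [0.02, 0.02, 1.0, 1.0])
# Full volume every time
full_every_run = initial_load_messages(RECORDS, CALLS, [1.0, 1.0, 1.0, 1.0])
print(deferred, full_every_run)
```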

Tracking Tips

As just mentioned, it’s important to track your consumption, both throughout the SDLC and once you’ve gone live. There are two ways to achieve this:

Tip #3: Utilise the Licensing and Usage Screen

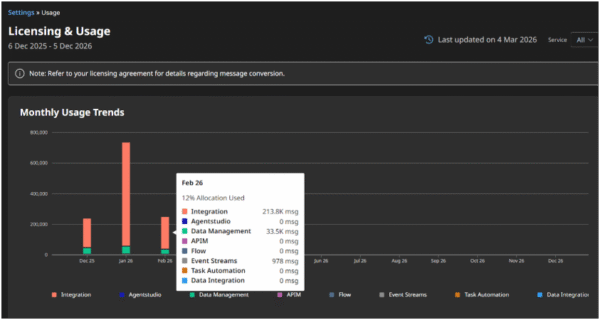

Boomi provides a graphical representation of your consumption under the Licensing and Usage screen (available under settings). This gives a monthly breakdown of your consumption across all services.

You can also see the previous year’s usage by selecting a specific service and then checking Previous Year.

Image 4: Licensing & usage screen

This is a great way to get a quick view of your consumption per service, including an estimate of if/when you’ll exceed your current tier. However it doesn’t provide any more granularity than per month, nor does it show you a breakdown at the Integration level.

Tip #4: Build your own tracking using the Boomi Platform APIs

To get this more granular reporting, you can use the Platform API's ExecutionRecord and ExecutionSummaryRecord APIs to get all the detail you want.

Because Boomi only stores this usage data for up to 30 days, you'll need to build a process in Integrate that runs daily. It fetches the incremental usage data and stores it in a service such as Blob Storage or a database. You can then use Excel (import the data and build a pivot table) or even Power BI to generate your own reports.

This is obviously a more complex exercise, but you get granularity down to individual process executions, which should provide all the detail you could need.
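The reporting side of this can be prototyped before committing to Power BI. The sketch below shows two pieces of the daily job described above:

- computing the previous day's UTC window to fetch
- pivoting stored execution rows into message totals per process per day

It does not call the Platform API. The row keys (`process`, `execution_time`, `messages`) are hypothetical placeholders. Map them from whatever your ExecutionRecord/ExecutionSummaryRecord extract actually contains. Timestamps are assumed to be stored as UTC ISO 8601 strings.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone


def previous_day_window(now: datetime) -> tuple[datetime, datetime]:
    """Return the UTC [start, end) of the day before `now` (an aware datetime)."""
    today = now.astimezone(timezone.utc).replace(hour=0, minute=0, second=0, microsecond=0)
    return today - timedelta(days=1), today


def pivot_by_process_and_day(rows: list[dict]) -> dict[tuple[str, str], int]:
    """Sum messages per (process, day), much like an Excel pivot table."""
    totals: dict[tuple[str, str], int] = defaultdict(int)
    for row in rows:
        day = row["execution_time"][:10]  # 'YYYY-MM-DD' prefix of an ISO 8601 timestamp
        totals[(row["process"], day)] += row["messages"]
    return dict(totals)


# Hypothetical rows, as they might look after being stored by the daily job
rows = [
    {"process": "Customer Sync", "execution_time": "2024-03-14T01:00:00Z", "messages": 3},
    {"process": "Customer Sync", "execution_time": "2024-03-14T01:15:00Z", "messages": 5},
    {"process": "Order API", "execution_time": "2024-03-14T09:02:11Z", "messages": 2},
]
print(previous_day_window(datetime(2024, 3, 15, 9, 30, tzinfo=timezone.utc)))
print(pivot_by_process_and_day(rows))
```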

Other Tips

There are a few other recommendations that can help with both estimating and reducing consumption.

Tip #5: Confirm API calls early

Your estimates are only as good as what you know, particularly when it comes to connector calls. If you can identify all the APIs you'll be hitting early in the project, your estimates will be more accurate. For example, it's not uncommon to initially think an integration will only need to hit one or two APIs, only to find later that it needs more. Finding this out early also saves rework later on.

Tip #6: Use Batch Calls

Many APIs provide a batch mechanism for sending data. DataHub is a great example. Using the REST client to hit the batch endpoint is more efficient than using the DataHub connector, because the connector only allows individual Golden Records to be updated, whereas the REST API lets you send batches of up to 200. Keep in mind that this will likely require a JWT token API call as well, so you'll want at least 3 or 4 Golden Record changes per execution to make it worthwhile.
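A quick comparison shows where the break-even point lies. This sketch makes the following assumptions:

- the connector costs one message per Golden Record
- the REST approach costs one JWT token call plus one call per batch of up to 200 records
- no calls are made at all when there are no changes
- token caching and any logging calls are ignored

```python
import math

BATCH_LIMIT = 200  # maximum records per REST batch, as noted above


def connector_messages(changes: int) -> int:
    """DataHub connector: one call (message) per Golden Record."""
    return changes


def batch_messages(changes: int, batch_size: int = BATCH_LIMIT) -> int:
    """REST batch: one JWT token call plus one call per batch (assumed)."""
    if changes == 0:
        return 0  # assumes the process skips the token call when there is nothing to send
    return 1 + math.ceil(changes / batch_size)


for n in (1, 2, 3, 4, 500):
    print(n, connector_messages(n), batch_messages(n))
```

Under these assumptions, the batch approach breaks even at two changes per execution and starts saving messages from three. This is consistent with the rule of thumb of 3 or 4 changes.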

Tip #7: Refine your Estimates

As previously mentioned, estimates are just that, and they're likely to change. Make sure all stakeholders understand this. As you proceed through the project, update your estimates with new discoveries and keep the team informed.

Tip #8: Consider Changes Carefully

When you're asked to add new features or functionality to an integration, keep consumption in mind. For example, a business requirement may need new connector calls. Always advise stakeholders of any resulting increase in consumption.
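Even a single extra connector call can add up quickly. Here is a back-of-the-envelope sketch using a hypothetical schedule that runs every 5 minutes around the clock for 30 days, with a hypothetical combined environment loading of 125%.

```python
def added_monthly_messages(extra_calls_per_execution: int, frequency_minutes: float,
                           hours_per_day: float, days_per_month: int,
                           env_loading: float = 1.0) -> float:
    """Extra messages per month caused by adding connector calls to a schedule."""
    executions_per_month = (60 / frequency_minutes) * hours_per_day * days_per_month
    return extra_calls_per_execution * executions_per_month * env_loading


print(added_monthly_messages(1, 5, 24, 30))                    # production only
print(added_monthly_messages(1, 5, 24, 30, env_loading=1.25))  # across all environments
```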

Conclusion

Boomi’s consumption model offers tremendous flexibility, but that flexibility comes with a responsibility to plan, estimate, and monitor consumption far more actively than in traditional connector‑licensed projects. As covered above, consumption is influenced by many moving parts – integration design, execution frequency, environment usage, and even seemingly small details like pagination or logging.

Success with consumption isn’t about guessing perfectly on day one; it’s about building a disciplined approach to estimation, validating assumptions early, and continuously refining your numbers as the project evolves. The more you understand your APIs, data volumes, and integration patterns upfront, the more predictable your costs become.

Equally important is ongoing visibility. Leveraging Boomi’s Licensing & Usage dashboard gives you a high‑level view, while Platform APIs allow you to build granular reporting that keeps surprises at bay. Combined with thoughtful design choices – such as batching, minimising unnecessary connector calls, and managing DataHub repositories carefully – teams can keep consumption efficient without compromising functionality.

Ultimately, this kind of planning and tracking rewards teams who treat consumption as a first‑class design consideration. With the right estimation framework, proactive communication with stakeholders, and continuous tracking throughout the SDLC, organisations can take full advantage of the consumption model while maintaining control over cost and performance.

If you want to get consumption right from the start, Adaptiv brings the structure and hard-won experience to make it happen.