3D televisions had their moment. Smartphones changed the world. The difference was public perception and adoption: only the latter survived and became an everyday can't-live-without item. The technology landscape never stands still, and the industry never knows what's around the corner. In the same way, API-led Connectivity has made its mark by making integrations interoperable and easier to extend. It allows businesses to build reliable integrations faster, with the option to add more functionality effortlessly. It's the smartphone of integration!

API-led Connectivity is an established way of connecting and maintaining integrations so that everything is decoupled and reusable.

There is no longer a need for separate point-to-point integrations for each system, even when the data is similar in nature but held in incompatible formats. API-led Connectivity lets you reuse the same components, which means less work is required when adding new systems into the mix. These benefits save your organisation time and money by increasing productivity, reducing duplicated code and greatly reducing time to market.

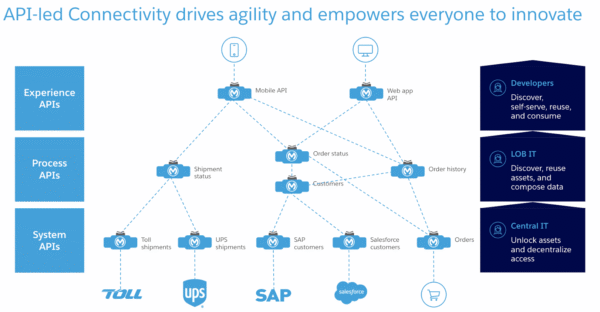

There are three layers in API-led Connectivity: the experience layer, the process layer, and the system layer, which I explain in further detail below.

API-led Connectivity

API-led Connectivity has been around as a concept for several years now; it was originally developed by MuleSoft with Ross Mason as the lead. Although it was designed for MuleSoft integrations, the principle can be applied to other integration platforms as well.

API-led Connectivity is already proving invaluable for businesses with large ecosystems. They no longer need thousands of fragile integration components; instead, they can invest in decoupled, modular components that improve data interoperability. This makes it much easier to add new components and to ingest data that improves visibility of their customers, so they no longer miss new opportunities, which provides an immense amount of value.

There are two schools of thought with API-led Connectivity: the traditional 3-layer approach (experience APIs, process APIs and system APIs), which has been the de facto way to achieve it, or a balanced approach that takes advantage of cutting-edge technologies to reduce API costs while achieving the same goal.

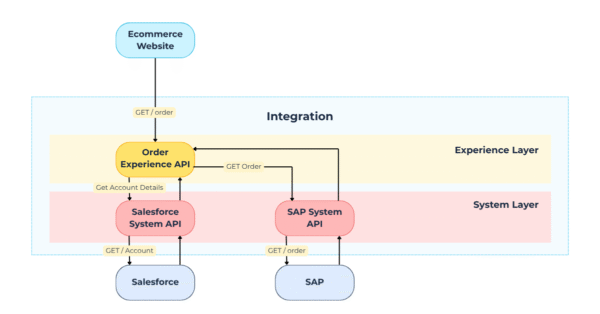

Figure 1: Topology of different APIs working together in an API-led Connectivity enabled system

The Traditional 3-layer Approach

Experience Layer

This layer is the front-facing entry point to your integration. Its primary function is to receive data and transform it into a canonical structure, which makes the data more useful and consistent, especially when it comes from a system with limited outbound data flexibility. The experience layer contains the APIs that outside resources interact with, and it is designed to abstract system resources away from clients or public consumers outside of the MuleSoft ecosystem. Because of the outbound data constraint mentioned above, each source system may require its own experience API.

Common Scenarios

- An application sends data via REST to the experience API; the API transforms the inbound data into a canonical format and publishes it as an event to a queue for further processing upstream.

- The experience API requests data from upstream APIs (e.g. booking information or an order) and transforms it into another format if necessary, or leaves it in its canonical format.
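To make the experience layer's job concrete, here is a minimal Python sketch of the first scenario. It assumes a hypothetical canonical order model (`orderId`, `customerId`, `totalAmount`, `currency`, `receivedAt`) and hypothetical source field names (`order_no`, `cust`, `total`, `ccy`); an in-memory `queue.Queue` stands in for a real broker queue. It is not MuleSoft code, only the shape of the transform-then-publish step.

```python
import json
import queue
from datetime import datetime, timezone

# Hypothetical canonical order model shared by every layer.
CANONICAL_FIELDS = ("orderId", "customerId", "totalAmount", "currency", "receivedAt")

# In-memory stand-in for a broker queue (e.g. a Solace or Azure Service Bus queue).
order_events: "queue.Queue[str]" = queue.Queue()


def to_canonical(source_payload: dict) -> dict:
    """Map one source system's hypothetical field names onto the canonical model."""
    return {
        "orderId": str(source_payload["order_no"]),
        "customerId": str(source_payload["cust"]),
        "totalAmount": round(float(source_payload["total"]), 2),
        "currency": source_payload["ccy"],
        "receivedAt": datetime.now(timezone.utc).isoformat(),
    }


def handle_inbound_order(source_payload: dict) -> dict:
    """Experience API handler: transform, publish as an event, acknowledge quickly."""
    event = to_canonical(source_payload)
    order_events.put(json.dumps(event))
    return {"status": "accepted", "orderId": event["orderId"]}
```

Calling `handle_inbound_order({"order_no": 1001, "cust": "C-42", "total": "199.5", "ccy": "AUD"})` returns `{"status": "accepted", "orderId": "1001"}` and leaves one canonical event on the queue. A second source system would get its own mapper in place of `to_canonical` while still publishing the same canonical event.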

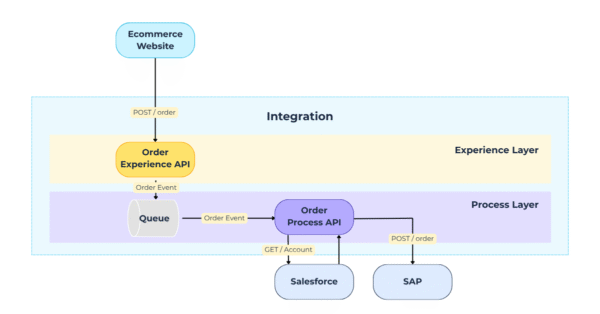

Process Layer

This layer is the heart of API-led integrations: all events flow through the process layer and are orchestrated to be sent to the system APIs that are interested in processing them. An event may not have all the information needed to complete the full payload, so the process layer is responsible for fetching further information or performing additional calculations to enrich the data and make it more useful.

Common Scenarios

- Purchase order events originating from a commerce website are received. The process API retrieves a credit rating from Salesforce and then sends the order to NetSuite, after which any enriched data is sent back to the commerce website and Salesforce. Additionally, the event could be sent to an analytics system API that forwards the data to a data warehouse.

- A scheduler runs periodically (say, once per hour) and asks a system API to retrieve data from a database or files from an SFTP site; the data is then sent to the commerce website. This scenario suits legacy systems or anything that is not time-sensitive (e.g. product updates to be published on an e-commerce website).
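The orchestration and enrichment described above can be sketched as follows. The credit-rating lookup and the system APIs are injected as plain callables (hypothetical stand-ins for calls to Salesforce, NetSuite or an analytics system API), so the sketch shows only the enrich-then-fan-out shape, not any platform's API.

```python
from typing import Callable, List

SystemApi = Callable[[dict], dict]


def make_process_api(
    get_credit_rating: Callable[[str], str],
    subscribers: List[SystemApi],
) -> Callable[[dict], List[dict]]:
    """Build a process API handler that enriches each event and fans it out."""

    def handle(event: dict) -> List[dict]:
        # Enrich: the inbound event lacks a credit rating, so fetch it (e.g. from a CRM system API).
        enriched = {**event, "creditRating": get_credit_rating(event["customerId"])}
        # Orchestrate: every interested system API receives the same enriched event.
        return [send(enriched) for send in subscribers]

    return handle
```

Adding another interested system means appending one more callable to `subscribers`; the enrichment step and the existing system APIs stay untouched.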

System Layer

This layer interfaces directly with a specific system such as SAP or Salesforce. Data received from process APIs is processed and transformed, and the payload is then sent to the system via its exposed APIs, queues, or other interfaces.

Common Scenarios

- An order is received from the process API, transformed into a system-compatible data schema and sent to NetSuite. The system API then extracts some values from the response payload and returns them to the process API.

- A consumer requests a customer account balance via the experience API. The experience API calls the system API, which in turn calls Salesforce. The system API maps the response payload into a canonical structure and returns it to the experience API, which returns the canonical payload to the consumer.
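A system API's two responsibilities, mapping canonical data into the target schema and returning only the values the caller needs, might look like this sketch. The target field names (`ext_ref`, `customer_ref`, `amount`, `currency_code`) are hypothetical and do not reflect NetSuite's actual record format; `post` is an injected stand-in for the real HTTP call.

```python
from typing import Callable


def canonical_to_system(order: dict) -> dict:
    """Map a canonical order onto a hypothetical end-system schema."""
    return {
        "ext_ref": order["orderId"],
        "customer_ref": order["customerId"],
        "amount": order["totalAmount"],
        "currency_code": order["currency"],
    }


def make_system_api(post: Callable[[dict], dict]) -> Callable[[dict], dict]:
    """System API: map to the system schema, call the system, return only what callers need."""

    def handle(order: dict) -> dict:
        response = post(canonical_to_system(order))
        # Extract the values the process API cares about, in canonical terms.
        return {"orderId": order["orderId"], "systemOrderId": response["id"]}

    return handle
```

Keeping the system-specific schema inside this one function means a change to the end system's fields touches only the system API, not the process or experience layers.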

The Cost Optimised Approach

The traditional 3-layer approach suits most use cases and is the preferred way to implement API-led Connectivity. However, some scenarios might not benefit from three layers of APIs because of the consumption cost of the extra flows, yet would still benefit from the underlying concept. API-led Connectivity treats integrations as modular building blocks, enabling expandable, decoupled and flexible design. This ensures APIs are not tied to single use cases but can be easily extended, reducing development time and cost while maintaining reliability and resilience.

This evolution provides an alternative aimed at reducing API consumption costs. Historically, many software providers, including MuleSoft, licensed their platforms based on blocks of allocated resources such as CPU and memory. Modern cloud platforms have introduced consumption-based licensing models based on the number of APIs, transactions and data throughput. This shifts the focus from maximising the load on allocated resources to minimising the number of API calls and flows within an integration.

The more APIs that are called in an integration, the greater the cost can be, so it makes sense to reduce some of those API calls, but in a way that doesn't reduce your integration's ability to adopt more functionality and include more systems.
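One rough way to reason about the cost difference is to count flow executions. The sketch below assumes, purely for illustration, that every API runs one flow per message and that each message is delivered to `system_targets` system APIs; real consumption-based licences also weigh transactions and data throughput, so treat this as a relative comparison only.

```python
def flow_executions(
    messages: int,
    system_targets: int,
    experience: bool = True,
    process: bool = True,
    system: bool = True,
) -> int:
    """Count flow executions, assuming one flow run per API per message (a simplification)."""
    per_message = int(experience) + int(process) + (system_targets if system else 0)
    return messages * per_message
```

With 10,000 messages and three system targets, the full 3-layer design gives 50,000 flow executions, dropping the experience layer gives 40,000, and dropping the system layer gives 20,000.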

Event-driven platforms such as Solace or Azure Service Bus have become more sophisticated and can route messages based on specific conditions in the event. This can remove the need for a process API in cases where data enrichment is not required. Likewise, it can remove the need for system APIs when end systems have well-defined API resources secured with strong practices such as OAuth 2.0: the process API can map the data into the required structure and send it directly to the destination system. There may also be scenarios where the source system can pre-map the data into a well-defined canonical structure and publish the event directly to an event queue, ready for the process API to subscribe to it.
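Condition-based routing on the broker is what lets a layer disappear. The sketch below is loosely modelled on hierarchical topic subscriptions such as Solace's, where properties of the event are encoded as topic levels (Azure Service Bus achieves similar results with subscription filter rules instead). It is a simplified stand-alone matcher (`*` matches exactly one level, a trailing `>` matches one or more levels), not Solace's API, and the topic and consumer names are hypothetical.

```python
from typing import Dict, List


def topic_matches(subscription: str, topic: str) -> bool:
    """Simplified hierarchical matching: '*' = exactly one level, trailing '>' = one or more levels."""
    sub_levels = subscription.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(sub_levels):
        if level == ">" and i == len(sub_levels) - 1:
            return len(topic_levels) > i  # at least one more level must remain
        if i >= len(topic_levels):
            return False
        if level != "*" and level != topic_levels[i]:
            return False
    return len(sub_levels) == len(topic_levels)


def route(topic: str, subscriptions: Dict[str, List[str]]) -> List[str]:
    """Return the consumers whose subscriptions match the published topic."""
    return sorted(
        consumer
        for consumer, patterns in subscriptions.items()
        if any(topic_matches(p, topic) for p in patterns)
    )


subs = {
    "netsuite-system-api": ["orders/created/*"],
    "analytics-system-api": ["orders/>"],
    "au-warehouse-system-api": ["orders/*/AU"],
}
```

With these subscriptions, `route("orders/created/NZ", subs)` returns `["analytics-system-api", "netsuite-system-api"]`, so an NZ order never reaches the AU warehouse system API without any process API making that decision.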

Most integrations that follow API-led Connectivity principles will likely still have APIs in each layer, because different source and end systems offer different functionality. Note that eliminating layers in an integration is not ideal, but it might be necessary to save on consumption licensing. Eliminating a layer almost always comes with disadvantages, as described in the scenarios below, so always review the design considerations carefully before doing so.

Scenarios

Each of the following scenarios describes a different way a layer can be excluded, along with its disadvantages. We can assume the only real advantage is a lower consumption cost for flows; removing a layer may also reduce latency slightly, but the gain is most likely negligible.

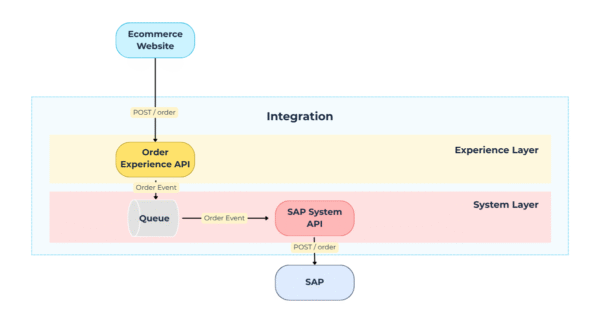

Process/System Layer

The source system can map events itself and send them directly to the event-driven platform in use (e.g. a Solace topic or an Azure Service Bus topic). Not only does this eliminate the experience API, it also streamlines the integration by reducing the number of API flows. The process API then consumes the event and processes it.

Figure 2: Topology eliminating the experience layer in favour of queues

Disadvantages

- You are placing responsibility on the source system to map the event to the canonical model, instead of performing that mapping in the experience API layer.

- Most systems struggle to get outbound REST webhooks right, let alone publish events to a queue.
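If the source system publishes canonical events itself, the validation the experience API used to perform moves with it. Here is a minimal sketch of that source-side guard, assuming a hypothetical canonical order contract, a hypothetical `orders/created/<region>` topic scheme, and an injected `publish(topic, event)` function standing in for the broker client.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical canonical contract the source system must now honour on its own.
CANONICAL_ORDER_SCHEMA: Dict[str, type] = {
    "orderId": str,
    "customerId": str,
    "totalAmount": float,
    "currency": str,
}


def validate_canonical(event: dict) -> List[str]:
    """Return a list of contract violations (empty means the event is publishable)."""
    errors = []
    for field, expected in CANONICAL_ORDER_SCHEMA.items():
        if field not in event:
            errors.append(f"missing {field}")
        elif not isinstance(event[field], expected):
            errors.append(f"{field} should be {expected.__name__}")
    return errors


def publish_from_source(
    event: dict, region: str, publish: Callable[[str, dict], None]
) -> Tuple[bool, List[str]]:
    """Validate first, then publish straight to the topic the process API subscribes to."""
    errors = validate_canonical(event)
    if errors:
        return False, errors
    publish(f"orders/created/{region}", event)
    return True, []
```

Without this guard, a malformed event would travel all the way to the process API before anyone noticed, which is one practical cost of moving the mapping out of the experience layer.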

You could also expose synchronous endpoints on the process API directly. The source system sends REST requests straight to the process API, which orchestrates them and returns the results.

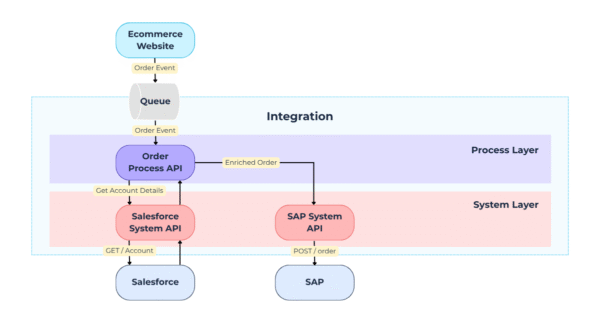

Figure 3: Topology eliminating the experience layer and exposing REST resources via the process API

Disadvantages

- A queue could be added to the process layer, but this would either increase the flow count or add further complexity (e.g. one queue for everything, filtered after consumption).

- Not suitable when latency is a concern and guaranteed delivery is required, as the flow is not event-driven.
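The synchronous variant and its delivery weakness can be illustrated with this sketch: a hypothetical process API handler that calls each system API in turn and returns an HTTP-style `(status, body)` tuple. The system API callables are injected stand-ins, and nothing is persisted for retry, which is exactly the gap a queue would fill.

```python
from typing import Callable, Dict, Tuple

SystemCall = Callable[[dict], dict]


def make_sync_process_endpoint(systems: Dict[str, SystemCall]) -> Callable[[dict], Tuple[int, dict]]:
    """Process API exposed as a synchronous REST-style handler returning (status, body)."""

    def handle(request_body: dict) -> Tuple[int, dict]:
        results: Dict[str, dict] = {}
        for name, call in systems.items():  # called in order; the client waits for all of them
            try:
                results[name] = call(request_body)
            except Exception as exc:  # broad on purpose for the sketch
                # Nothing is queued for retry: earlier calls already took effect and
                # the client has to decide whether to resend.
                return 502, {"failedAt": name, "error": str(exc), "completed": list(results)}
        return 200, results

    return handle
```

When the second call fails, the first system has already processed the request; the caller only receives a `502` and must decide whether and how to retry.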

Experience/System Layer

In some scenarios, data orchestration is simply not required. This is the case when the source of the data is the master, meaning there is nothing further to enrich it with.

Figure 4: Topology eliminating the process layer in an event-driven enabled system

Disadvantages

- It limits the ability to perform data enrichment later, potentially adding further development time (adding enrichment in the system layer could be bad practice).

- There is no process API to handle a reply event if the source system requires data back from the end system.
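Without a process layer, system APIs subscribe to the experience layer's topic directly. The sketch below uses a hypothetical in-memory bus in place of a real broker to show both the direct wiring and the missing reply path: nothing is subscribed to the reply topic, so a reply published there reaches no one. The topic names are illustrative.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List

Handler = Callable[[dict], None]


class InMemoryTopicBus:
    """Stand-in for a broker topic: every subscriber receives every event published to it."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(event)
        return len(handlers)  # how many consumers received the event
```

For example, e-commerce and search-index system APIs could both subscribe to a `products/updated` topic fed by the experience API for the product master; a reply published to `products/updated/reply` returns `0`, because no process API exists to pick it up.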

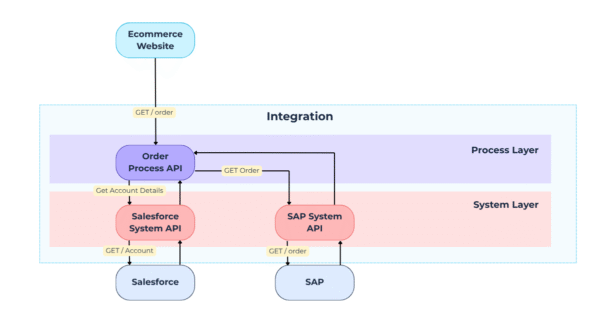

The process API could also be eliminated when requests originating from the experience layer are synchronous: the experience API can call the system API directly.

Figure 5: Topology eliminating the process layer in a system using only synchronous REST APIs

Disadvantages

- It limits the ability to perform data enrichment later, potentially adding further development time (adding enrichment in the system layer could be bad practice).

- Not suitable when latency is a concern and guaranteed delivery is required, as the flow is not event-driven.
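One way to soften the "enrichment later" disadvantage is to have the experience API depend on a lookup function rather than a concrete client. In this sketch the system API call is an injected callable (`lookup`), and `enrich_with_process_step` is a hypothetical wrapper showing how a process step could later be slotted in behind the same signature. Field names are illustrative.

```python
from typing import Callable

BalanceLookup = Callable[[str], dict]


def make_balance_experience_api(lookup: BalanceLookup) -> Callable[[str], dict]:
    """Experience API that calls a system API lookup directly (no process layer)."""

    def handle(account_id: str) -> dict:
        canonical = lookup(account_id)  # synchronous call straight to the system API
        # Shape the canonical payload for this particular consumer.
        return {
            "account": canonical["accountId"],
            "balance": f"{canonical['balance']:.2f} {canonical['currency']}",
        }

    return handle


def enrich_with_process_step(lookup: BalanceLookup, extra: Callable[[str], dict]) -> BalanceLookup:
    """Hypothetical process step added later behind the same signature."""
    return lambda account_id: {**lookup(account_id), **extra(account_id)}
```

Exposing new enriched fields to the consumer would still mean changing the experience API's response mapping, but the wiring between the layers would not need to change.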

Experience/Process Layer

Some end systems are highly customisable, follow REST principles well, and let you build internal logic that accepts canonical data and maps it directly. These systems are also secured with OAuth 2.0. Essentially, the end system can act as its own system API.

Figure 6: Topology eliminating the system layer in an event-driven enabled system

Disadvantages

- Mapping to the end system's schema within the integration adds more complexity to the process API.

- Mapping via the end system's internal flows, if it has that capability, moves part of the integration responsibility to the end system's developers.

- Code duplication can occur when different process APIs need to access the same system resources.
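Sending canonical data straight to an OAuth 2.0-secured end system typically means obtaining a token and then calling the end system's endpoint with it. The sketch below only builds the two requests with Python's standard `urllib.request`; it assumes the client credentials grant (RFC 6749, section 4.4) with credentials sent in the form body, and bearer-token usage (RFC 6750). The URLs are hypothetical placeholders, and some authorisation servers require HTTP Basic client authentication instead.

```python
import json
import urllib.parse
import urllib.request

# Hypothetical endpoints: a real end system documents its own token and resource URLs.
TOKEN_URL = "https://auth.example.com/oauth2/token"
ORDERS_URL = "https://erp.example.com/api/canonical/orders"


def build_token_request(client_id: str, client_secret: str) -> urllib.request.Request:
    """OAuth 2.0 client credentials grant (RFC 6749, section 4.4), credentials in the form body."""
    body = urllib.parse.urlencode(
        {"grant_type": "client_credentials", "client_id": client_id, "client_secret": client_secret}
    ).encode()
    return urllib.request.Request(
        TOKEN_URL,
        data=body,
        method="POST",
        headers={"Content-Type": "application/x-www-form-urlencoded"},
    )


def build_canonical_post(access_token: str, order: dict) -> urllib.request.Request:
    """POST the canonical payload straight to the end system using a bearer token (RFC 6750)."""
    return urllib.request.Request(
        ORDERS_URL,
        data=json.dumps(order).encode(),
        method="POST",
        headers={"Authorization": f"Bearer {access_token}", "Content-Type": "application/json"},
    )
```

In a real process API the token request would be sent with `urllib.request.urlopen`, the `access_token` field read from the JSON response, and the token cached until it expires.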

That said, this doesn't mean the 3-layer approach is now obsolete. There are still plenty of valid reasons to use it, and it remains preferable, but a cost-saving approach might better fit the needs of the business if cost is a concern. As long as the design remains modular and easily expanded without impacting performance, it still falls within the bounds of API-led Connectivity principles.

Conclusion

Applying API-led Connectivity practices to your integration doesn't mean it has to be done a specific way, but it does mean it should be built so that all components are decoupled and can be extended with minimal effort. It should be possible to add many new systems that consume the data with no code refactoring and minimal development work. This is especially important for future-proofing integrations to take full advantage of AI and whatever business use cases come next.

Systems and needs are constantly evolving; smart integrations have gone from a nice-to-have to an absolute must.

Continuing to build point-to-point integrations without reuse and flexibility may seem like the most cost-effective option upfront, but it is a very outdated practice. It will inflate costs later, when new features require refactoring and more API flows, and it can increase maintenance costs.